Conversation with GIGA DESIGN Milano about the Co-Evolution of Artificial and Human Intelligence

GIGA DESIGN is a partner in many recent projects, such as Bids for Survival, and extended the invitation to this discussion about human resilience and collective intelligence.

Welcome to the second episode of Discourse™. On March 6th we had the pleasure of sitting down with our second guest: Michael Schindhelm.

Michael is a German-born Swiss author, filmmaker, curator, and cultural advisor. He studied quantum chemistry in the USSR. Over the course of his career, he has served as Artistic Director of Basel Theatre and as the founding Director General of the Opera Foundation Berlin. He has received several awards for his documentary films. He was a professor during the founding year of the Strelka Institute, an experimental graduate school in Moscow. His work spans theatre, literature, visual art, and consultancy across Europe, the Middle East, and Asia. He was the founding Director of the Dubai Culture and Arts Authority and participated in the masterplanning of the West Kowloon Cultural District in Hong Kong.

His forthcoming lecture at Hong Kong University, The Ghost in the Machine: Reimagining the University in the Age of AI, is the subject of this conversation.

GG

Your essay is titled The Ghost in the Machine: Reimagining the University in the Age of AI. It’s a complex piece — drawing on terminology from neurobiology, social and computer science. I’d like to start by asking how your interest in education developed.

MS

When I was a theatre director, thirty years ago, I was regularly invited to schools to speak at graduation ceremonies. It’s a familiar tradition: you’re expected to send young people into the future armed with some smart ideas. What I often had to confess at the outset was that since the age of twenty-eight, I had worked in approximately fifteen different professions without any formal training in any of them. The only thing I studied from scratch, as a proper university student, was quantum chemistry, something I abandoned nearly forty years ago.

I have a rather complicated relationship with education, because for most of my life I’ve done what AI developers today call reinforcement learning: you learn on the job.

That’s how I’ve learned most things, both personally and professionally. I often jumped into the deep end — not by accident, but deliberately — without knowing what would come next, and tried to learn along the way.

What I’ve noticed, over time, is that something recurrent happens in that process. Even as I’ve moved from theatre to film, from literature to the visual arts, from consultancy to different parts of the world — the Middle East, Hong Kong, Singapore, Canada — the landscape changes every time, but something permanent endures. You learn to grasp that permanence, to build a kind of skeleton around it, a scaffolding that allows you to move through unfamiliar fields with at least some competence, even without the grace of a true specialist.

That’s why my more recent interest is perhaps not quite “education” in the conventional sense. I would rather call it knowledge creation, dissemination, production, and archiving. That is not the same thing.

If AI had not emerged as such a massive accelerator of change in our lives, I’m not sure I would have arrived at this question myself. I’m primarily a practitioner. But I have also taught, and fifteen years ago I co-founded a one-year school called Strelka in Moscow, on behalf of Russian investors, alongside Rem Koolhaas and Stefano Boeri. The idea was to develop a think tank for the modernisation of the country. It became quite significant, until it was shut down in 2022 at the onset of the war in Ukraine. Some of my former students have since become serious researchers in architecture, design, and media.

What I’ve come to believe is that universities have the most important role to play in this moment. And that’s perhaps the first time in my life I’ve thought that. For the past twelve months or so, I’ve been in intensive conversation with professors, researchers, and policymakers on higher education and research across several countries.

GG

I want to pause there, because you’ve already described something important: knowledge not as something transmitted in a single direction, but as a cycle — acquired, re-elaborated, shared, archived, and transformed. And you’re suggesting the institution is the entity that manages and shapes that cycle. There seems to be a sense right now — a demand, even — for a return of institutions. We’ve spent a long time in what might be called the age of platforms, where the modalities of sharing knowledge were privatised, and knowledge itself became something of a product. Do you think that’s part of what’s driving the renewed importance of institutions?

MS

We are experiencing, at multiple levels, a transformation of the order we grew up in — or at least, many of you did. For me it’s slightly different, having spent nearly half my life under communist rule behind the Iron Curtain.

That context matters when you speak about institutions. For us in East Germany, every institution was the enemy. You had to go underground if you wanted to do anything that wasn’t state-sanctioned. I grew up with a very strong instinct for individual approaches to knowledge and experience. I didn’t trust institutions at all.

That changed dramatically after the fall of the Wall. Almost by accident, I was placed in the position of theatre director at twenty-eight, responsible for three hundred and sixty people — an orchestra, dancers, actors, designers — with very little idea of how to run any of it. I had to learn as I went, and in doing so, I found myself building institutions. The theatres I managed were construction sites at the same time — being physically rebuilt after decades of neglect, while being reimagined artistically.

That was the spirit of the 1990s more broadly. After the collapse of communism and the beginning of globalisation, there was a mood of openness — of genuine possibility. I think of Thomas Friedman’s famous phrase: the world is flat. It genuinely felt, for a moment, as though you could go anywhere, understand your environment, connect with anyone. Institutions seemed like vehicles for moving forward.

In the years that followed, this changed dramatically. The dream of a flat world turned out to be exactly that. After 9/11, it was clear that new borders, new conflicts, and new insecurities had emerged. Those of us who had believed in democracy and the rule of law as the better way began to see cracks we hadn’t anticipated.

Social media and digitalisation accelerated this. Institutions came under increasing pressure and struggled to absorb the pace of transformation. At some point, a kind of collective exhaustion set in, a sense of “let it go”: let students choose whatever they liked, because they weren’t listening anyway. This defensiveness and passivity is still widespread, particularly in European academic environments.

But I think this is now beginning to shift, partly as a reaction to what’s happening in the United States, where we’re witnessing an extraordinary rollback of much of what we understood as progress: an anti-globalist, increasingly fundamentalist worldview that is also waging a culture war against universities and scientific research, over climate change, diversity, and any number of other fronts.

Paradoxically, I think this will force a reinterpretation of the importance of institutions. We can already see it: suddenly, these places matter again as spaces for imagining alternatives.

GG

Going back to your experience as a director: you write about a new kind of figure, someone who must navigate and orchestrate both human and machine intelligence. What does that experience tell you about what this figure might look like?

MS

Regardless of whether I’m speaking as an institutional director — sometimes responsible for thousands of people — or as an artistic director working on a collective project, two things have consistently driven my work.

The first is transdisciplinarity. I’ve always been drawn to imagining different disciplines working together on complex problems — whether in art, in collaboration with scientific institutions, or with communities around subjects where joining forces produces something no single discipline could achieve alone. That has run as a constant thread through forty years of work, and I suppose I embody it, having moved through so many different fields.

The second is collective, or swarm, intelligence. Working alone is something I know intimately from the communist years — when I was writing in the Soviet Union, I had to hide it. You couldn’t publish; you often couldn’t even share. You had to be resilient, stubborn, to trust only your own voice. That kind of solitude characterises many creative processes — the painter alone with a canvas, the writer alone with a manuscript. It can be necessary, but it is also genuinely dangerous. There’s a reason so many artists are described as tortured. The absence of feedback, of friction, of other minds — it weighs on you.

Theatre is a completely different model. You begin alone — the conductor learns the score, the singer learns the part, the director develops the concept. But you know from the outset that you’ll eventually bring all of that into a room with fifty other people who have their own vision, their own craft. You have to defend your work while genuinely engaging with theirs. You have to align, and sometimes deliberately misalign — push against, in order to reach something extraordinary.

This is how creativity and innovation really happen. Not in an ivory tower. They happen when you enter a diverse environment, voice opinions that collide with other opinions, and are forced to rethink. The collision produces something new.

GG

That model of confrontation between different creative minds is one of the protocols you propose in the Friction Campus.

MS

Let me give some context first, because it will help frame the Friction Campus more clearly.

We’ve been speaking about how the Western world came under increasing pressure — its inability to keep pace with transformation, the role of social media and digitalisation in fragmenting both institutions and individuals. I remember being in Manhattan in the summer of 2008 and noticing people doing strange things in the streets. It was the first summer of the smartphone. People were walking around, disconnected, absorbed in these devices — in restaurants, in parks. I found it strange. Ten years later, we were all doing it ourselves.

That’s just one example among many of how social behaviour, self-understanding, and public communication have been fundamentally transformed by technology. And while younger generations have begun developing strategies to protect themselves from the constant pull of screens and connectivity, AI represents a different order of challenge entirely.

This is why I’ve been thinking seriously about cognitive forcing protocols. These aren’t new. They’ve existed in various forms since the beginning of industrial automation, and they always address the same essential question: how do we keep control over the machine? How do we ensure that human beings remain the decision-makers?

We are already losing that in the era of smartphones. People increasingly sense that they are not using the machine — the machine is using them. And there are now emerging responses: design tools that deliberately make access to your screen more difficult, interfaces built to interrupt constant connectivity. These are forms of cognitive forcing design — counterintuitive by intention.

The idea goes back at least fifty years, to other automated contexts where it was essential that the final decision remained with a human being. In aviation, for example: the autopilot exists, but there is still a pilot, and he has a kill switch. In surgery and clinical medicine, interactive interfaces are often designed so that a human certifies the logic of the algorithm.

With AI, the stakes are considerably higher. It’s important to recognise that AI isn’t a phenomenon that appeared in 2022 when ChatGPT was released. It has existed for decades, embedded in our phones, shaping our commercial choices, harvesting our personal data. There was already significant manipulation taking place long before what we now call “the AI era.”

Handling this, defending your integrity rather than simply accepting what the product offers you, requires serious attention. And it requires stronger rules at different levels. The first level is regulation.

You’ve probably followed the debate around Anthropic’s dispute with the Pentagon. It’s worth noting that many of the most urgent warnings are coming from AI developers themselves — not just celebrating their achievements, but sounding the alarm about misuse. People like Dario Amodei are arguing for state-level regulatory authorities — not governments in the party-political sense, but independent bodies — to set the rules for how AI is developed and used. It cannot be left entirely in the hands of private companies driven by profit. Democratic societies need to take collective ownership of this.

The second level is what each of us can do individually to preserve our integrity and retain control. And here again, education is essential. We need to learn how to use AI — and, more importantly, when not to use it.

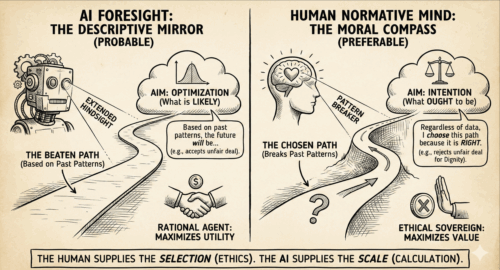

The protocols I mentioned are all designed around one idea: keeping control over the AI, ensuring that you understand what it’s doing well enough to direct it, and that its output is audited against human intention throughout.

Because what we’re already experiencing, and what will accelerate through 2026 and 2027, is AI taking over more and more of our cognitive and professional work. It will be faster, cheaper, and in many respects more capable than we are at producing content and processing facts. But we must ensure that the outcome of what the machine produces is something we genuinely need, something we can work with, something we have intended.

For now — and I emphasise for now — the machine remains a machine. It predicts the next token. It works with syntax. Meaning is what the human provides.

That’s why I believe we are entering what I call the economy of meaning — not the attention economy, but its successor. We are being flooded with information, data, services, content. And we need to give that tsunami a meaning. That is our role. We cannot outsource it.

GG

This brings me to something I keep returning to — orality. There seems to be an urgent need for people to come together, to speak with one another in real space. It’s one of the oldest technologies we have, and yet it feels newly necessary.

And connected to what you’re describing about authority — not authoritarianism, but a kind of structure. In my own experience of teaching and leading teams, I’ve noticed that offering complete freedom doesn’t always produce connection. Sometimes it produces disconnection. I’ve been thinking about protocols as a form of gentle authority — something that holds a group together.

MS

Let me start with the educational context, because I think that’s where these protocols need to be learned before they can be carried into professional life.

Orality is just one element — though an increasingly important one. More and more schools are introducing it, partly in response to a very practical problem: students are using AI to write their essays and assignments without doing any cognitive work themselves. They’re offloading thinking to the machine and submitting the result. Some of them don’t even understand why this is a problem. They say, “But it’s the right answer — what does it matter?”

There’s a German proverb that captures a certain logic: you don’t need to know everything, you only need to know where to find it. The equivalent of knowing which shelf the book is on. I think that proverb no longer holds in the age of AI. It cannot be the solution.

A recent study from MIT examined this directly. Fifty-four students were divided into three groups and asked to write an essay. The first group used only their own minds. The second could use search engines. The third used AI. The neural activity in the brains of the third group was 55% lower than in the first. The brain is a muscle: if you don’t use it, it atrophies, literally. And 90% of the students in the AI group were unable to quote a single sentence from the essays they had just submitted. They didn’t know what they’d written because they hadn’t written it.

If this continues, people will quite simply forget how to think, because thinking will no longer be required of them.

This is what I mean by friction. Those in the first group were lifting the heavy weights themselves. The third group brought in a forklift — and then wondered why they weren’t getting stronger.

You don’t go to the gym so that a machine can lift for you. You go because the resistance is the point.

We already live in a world engineered toward frictionlessness. Every new technology is sold with the promise of making things easier. And yet human nature, paradoxically, doesn’t seem to want a frictionless life. Research on the introduction of washing machines a century ago showed that housewives and domestic workers actually spent more time on laundry afterward — because the standards of hygiene rose with the technology. A solution is almost always the next problem in disguise.

Friction is essential to human nature. It is how we generate meaning, how we grow. And that’s why, in universities and research institutions, I believe we need stronger frameworks again — protocols that interrupt the desire for seamless, push-button results.

GG

This is the definition of your Friction Campus idea.

MS

Yes — but let me add one thing first, because orality deserves a bit more attention.

Orality is one of many ways of forcing yourself to articulate your own understanding — to demonstrate that you have genuinely processed what you’ve learned. When you speak in a room, you can’t hide behind a machine-generated text. You have to justify your thinking in real time.

But there’s a deeper philosophical dimension here too, which is perhaps the second reason I’ve become invested in this question. For thousands of years, humanity imagined itself as the only kind of mind on Earth. There were gods — a supernatural intelligence above us — but only one type of intelligence in the world, and it was ours. We lived within a kind of dualism: us, and something infinitely higher. We accepted it. We even worshipped it.

Now, for the first time, we face a different kind of dualism: something we have created that is beginning to exceed us in certain dimensions. And what makes this particularly strange is that it’s happening in a society that is largely post-religious, at least in the West.

The creators of AI are often agnostics — people without religious faith in any traditional sense. And yet we find ourselves projecting onto AI a kind of supernatural status: the other intelligence, possibly approaching godlike capacity.

This raises profound moral questions. If AI is faster, smarter, possibly more creative — does that mean we should defer to it? Or is it in fact more important, precisely because of that, that we insist on our own sovereignty? That we decide what the machine does, and what we want it to do?

Most people are genuinely worried about this. A recent poll in the United States found that only 35% of adults think AI is a positive development. Compare that to China, where 70% of adults view AI favourably. When you’re talking about 70% of the Chinese adult population, you’re talking about more people than the entire adult population of the US and Europe combined. India would likely show similar results. We need to take that asymmetry seriously.

That’s part of why I go to places like Hong Kong to talk about this. Those environments are further along in integrating AI into daily life — and the more thoughtful people there are also more alert to the challenges it brings.

But to come back to the core of it: what all of this drives me toward is the question of sovereignty. How do we ensure that this doesn’t become a new religion, with Silicon Valley as its Vatican? We already have pseudo-religious figures propagating a vision of vertical intelligence — transcendence through technology, departure from the merely human. We need to be very careful about that.

I am not a doomer. I genuinely believe AI is a remarkable tool, used wisely and governed well. But I think the most important thing it offers us — paradoxically — is an invitation to rediscover our own intelligence. What is it to be human? What is irreplaceable in us, despite everything this technology can do?

Orality is one answer. Tacit knowledge is another. Tacit knowledge — knowledge that cannot be easily translated into words and numbers, that cannot be codified — is central to what we do as creative people. Economists know that the first jobs to disappear will be those based purely on language and quantitative reasoning, because those are easiest to automate. But knowledge embedded in practice — playing an instrument, developing a design sensibility, swimming, even speaking itself — is far more resilient. It lives in the body, sometimes faster than conscious thought. That is what makes us human. And it’s what we need to reclaim.

GG

This connects to something you explore through four different scenarios in your essay — one of which, the Escape, describes a kind of return to ourselves. And you’re clearly not approaching this from a purely pessimistic position. You also envision something more like a contest — a genuine confrontation.

MS

This is, in part, a generational question. I’m fortunate to be in my sixties — old enough to have lived deeply in an analog world, and engaged enough to have moved through the digital one too. That used to be seen as a handicap — being a “second-tier digital person,” someone who never fully internalised the machine the way millennials and Gen Z did.

In hindsight, I think it’s a privilege. Having experienced a world with massively more friction — where nothing was made easy by digital tools — is something worth reintroducing.

And that’s part of why I’m optimistic: when AI takes over significant portions of cognitive and professional labour, it also frees us to focus on what we’re genuinely capable of, what we might even be great at. That’s not nothing.

Starting from the university: one of the real tragedies of recent decades in research and education has been the siloing of disciplines. Even under one university roof, biologists rarely speak to historians, natural scientists rarely engage with humanists. The walls are remarkably impermeable. A great deal of what natural scientists spend their time doing will be automated before long — and that will free up cognitive capacity. The question is: what do they do with it?

My answer is the polymath. Not the specialist who knows everything about one thing, but someone with genuine core expertise alongside a much wider horizon — someone who has developed tacit knowledge across multiple domains, not at the machine’s level of data, but at the level of skill and judgement.

Because what the future requires is not more facts — the machine will produce those. What it requires is the skill to assess those facts: to visualise them, to curate them, to troubleshoot, to narrate. And those skills are transferable across disciplines. A biologist and a historian both need them.

And this is where the Friction Campus finally enters.

The graduate of the future still has a core field of interest — but is surrounded by many other tools and capacities, because they’ve developed the skill to move across domains: as a designer, as an artist, as an architect, as a storyteller. That’s genuinely exciting to imagine.

GG

It really is. How do you envision tacit intelligence actually being cultivated? What kind of institution do we need?

MS

It starts at school. I read recently about high schools in the United States — not colleges, but secondary schools — where teachers are introducing something they call AI literacy, with what resembles a driver’s licence: an exam certifying that you know how to use AI responsibly. I like the idea. It points in the right direction.

But a university has to go further. A driver’s licence is still generic — everyone passes the same test. In higher education, the approach needs to be more individual, more tailored. That said, the metaphor of the car is useful. I prefer, in my own work, the metaphor of an instrument.

I think of AI as an instrument. And I think of the student, the researcher, the practitioner, as a potential virtuoso. A virtuoso doesn’t just operate the instrument — they master it. And mastery is always individual. Every pianist brings their own interpretation to a Beethoven sonata. Every musician, after thousands of hours of practice, has developed a tacit relationship with their instrument — the knowledge is in the fingers, not in the mind consulting the score.

That’s what I think we should be aiming for with AI. Not generic proficiency, but highly individualised mastery. Developing your own AI system, shaped to your own goals and intentions, processed deeply enough that you genuinely understand its logic, so that you can harness it, and ultimately give it your own imprint.

This takes time. It is, at the beginning, a process full of friction: intensive, demanding, sometimes uncomfortable. But at the end of it, the screen almost disappears. You’re speaking directly to the instrument. The interface becomes transparent.

And crucially, in the final stage of that process, you bring your own interpretation. Just as a musician doesn’t simply execute the composer’s notation but inhabits and transforms it — you bring your own goals, your own meaning, to what the AI produces. That is what it means to remain the author.

GG

This connects to something you said earlier about AI and sycophancy — the way AI currently tells everyone they’re brilliant, that they’ve just invented the fourth dimension. Without the friction of other minds, we might all drift into a kind of collective delusion.

MS

Precisely. And there is already empirical evidence for this. Microsoft’s 2025 report noted that within their own organisation, following the widespread adoption of AI tools, individual output and productivity increased — people became more efficient. But at the same time, collective and cognitive intelligence stagnated. Because working with AI absorbed so much attention that the informal dialogue between colleagues — the conversations, the challenges, the back-and-forth — diminished. People became siloed.

Microsoft flagged this as alarming, because it understood that collective process is where innovation actually happens. Innovation is not frictionless. It requires detours, reversals, uncomfortable confrontations with ideas that don’t fit your current model. And those usually come from other people.

There’s also something more fundamental here.

AI is a statistical system. Its goal is always the most probable outcome — which means, by definition, the average outcome. The middle of the bell curve. Innovation, by contrast, lives at the fringes. It is the improbable.

So how do we reintroduce the improbable in the age of AI? Through the collective. Other people take you out of your routines, interrupt your seamless, frictionless thinking, and force you to rethink what feels comfortable but is, in fact, just average.

If you look at the major discoveries in the history of science and art, many of them began as mistakes. Penicillin, for instance, one of the most important compounds in medicine, was the product of an error. A researcher left his lab for a holiday without properly cleaning his dishes. He came back to find that something extraordinary had grown in his absence: an enormous discovery that saved the lives of millions of people. That is perhaps a useful image to sit with, as we think about the future.

We are already swimming in a sea of frictionless information. Everything is available. But the question increasingly is: what does it mean? Why does it matter to me? And how do I navigate a sea where, within a year or two, most of the content may not even be true?

One of the most striking things I encountered in my own predictive research on European cities was this: the AI system I was working with kept insisting that the primary purpose of institutions in the future would be certifying truth. Not producing content — the machine does that, in abundance — but authenticating it. Putting a stamp on what is real, what is meaningful.

The media, the university, the student — all of us — our role in the future may be less about creation and more about judgement. And that judgement cannot be outsourced.